Blog May 28, 2019

Five Key Announcements from Google IO 2019

With Google IO 2019 in our rearview mirror, now is a good time to take a look back on what was delivered and determine the effects on your enterprise. Google IO goes beyond just mobile, with big announcements covering a broad range of technologies such as ML, AI, Web, Mobile, and Assistant. Google spent very little time covering releases in their Cloud platform this year, with most of those announcements being delivered just a few weeks prior to IO at Google Cloud Next.

In this post, we will take a look at a few of these key releases and how they will affect your enterprise iOS/Android applications.

Android Q

The next version of Android was a hot topic at Google IO. Though we do not know what Q stands for just yet, we are in the 3rd public beta and we know what most of the changes in Q will look like. Some of these changes have little to no effect on enterprise applications, while others require immediate attention to ensure compatibility.

Dark Mode

With Android Q comes Dark Mode, which applies not only to the System UI but also to all applications on the device. When users indicate that they want everything to be in Dark Mode, they expect it to take effect across all their applications. Google offers your app the ability to turn on "force" Dark Mode, which simply means the OS converts your colors on your behalf. This allows you to adopt Dark Mode quickly with little overhead. Alternatively, you can implement your own Dark Mode theme. It is strongly suggested that every app choose the route that is right for it, so that Dark Mode users do not have an inconsistent experience in your app alone. Check out a post from some of my colleagues on building Dark Mode with both of these options.

Gesture Navigation

Android Q now offers the ability to remove all navigation from the bottom of the screen, giving the user an immersive experience. This means that there will be no native back or home button when a user has this navigation style enabled. The user will instead rely on gestures such as edge swipes and flings to navigate within and between apps. For app developers, this means that features which rely on edge swipes and similar gestures may conflict with the system gestures and behave unexpectedly. Apps with this type of navigation are highly encouraged to look into alternatives or to define safe zones in which the app, rather than the system, handles the gestures.

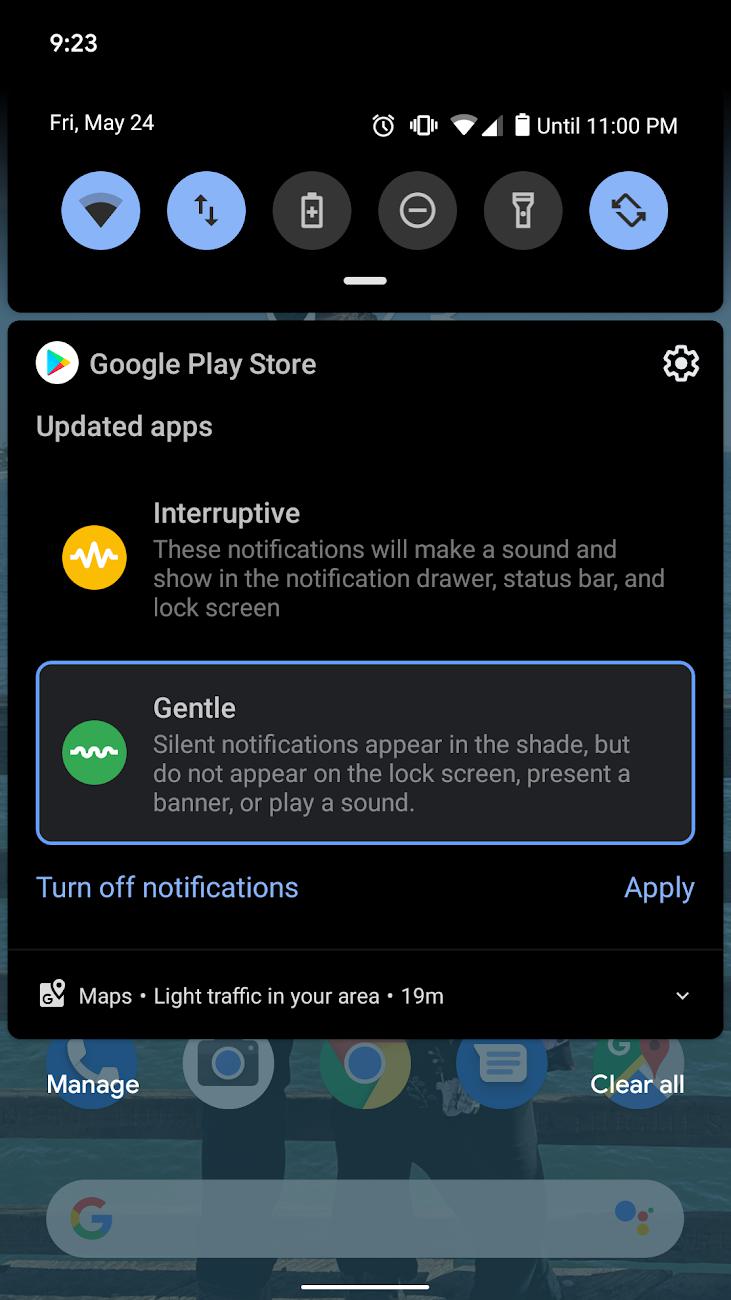

Notifications

Notifications within Android Q will attempt to address the issue of notification priority that has long plagued the Android platform. In the past, it was up to app developers to indicate whether the notification they were presenting to the user was of high priority or not. However, high priority soon became the default for nearly every app developer, and when everything is high priority, then nothing truly has priority. Android Q notifications will allow users to indicate the priority of future notifications so that they are only interrupted for the notifications they have indicated are important to them. Users will be able to swipe on a notification and adjust the priority of that type of notification with a simple tap of "gentle" or "interruptive". This means it's even more important to get your notifications' priority and channels correct to ensure your notifications get seen by your users.

Location Permissions

With privacy being a key focus for Google this year, it was important that Google took a hard look at location permissions on Android. Currently, when the user grants an application location rights, there is no governance around when the application may retrieve the user's location. This means the user might not have opened the app for several days, yet the application could still be using the user's location from the background. With Android Q, Google is following the iOS paradigm of giving the user the ability to share their location with an app only while the app is in the foreground. It will be very important for applications that use the location permission today to adjust their logic to ensure they are appropriately handling the new permission status.
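The decision an app now has to make before reading location can be sketched roughly as follows. This is plain Java rather than Android code: the `LocationPolicy` class, its `Access` enum, and the `canReadLocation` helper are hypothetical names used only for illustration, and "foreground" is simplified to mean that the app is visible to the user.

```java
/** Hypothetical model of the location grants a user can choose on Android Q. */
final class LocationPolicy {
    private LocationPolicy() {}

    enum Access {
        DENIED,          // user refused location access entirely
        FOREGROUND_ONLY, // location is shared only while the app is in use
        ALWAYS           // location is shared in the foreground and background
    }

    /**
     * Returns true when the app may read the user's location.
     * Assumption: appInForeground is supplied by the caller; this sketch
     * does not query the OS for the app's real state.
     */
    static boolean canReadLocation(Access access, boolean appInForeground) {
        switch (access) {
            case ALWAYS:
                return true;
            case FOREGROUND_ONLY:
                return appInForeground;
            default:
                return false;
        }
    }
}
```

An app that previously assumed a granted permission meant unrestricted access would need a check like this before scheduling any background location work, and a fallback for when the answer is no.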

Machine Learning

Consistent with the last couple of years, Google had a major focus again on Machine Learning and broadening its reach to all developers. They have done this primarily by operating between two platforms - Firebase and ML Kit. Both of these platforms are built to support both iOS and Android for consistent cross-platform experiences. This year, advancements were made on both of these platforms to not only make it easier for developers to implement, but to also make it easier for lower-end devices with poor network connectivity to take advantage of these features.

On Device Translation

Google has had great support for translating languages for a while now. However, these features required a network connection, and of course that meant latency. This year, Google announced a breakthrough in their translation models that allows language models to run on device with a download size of ~30 MB per language. This is available for free for both iOS and Android today, and it certainly opens the door to multi-language support within your application.

Cloud ML & ML Vision Edge

Google understands that while Machine Learning is growing, the science and technology stack behind it can be very difficult, to say the least. To help make ML more approachable, they have focused on Cloud ML, which allows developers (or anyone for that matter) to train models without ever writing a line of code. Furthermore, with ML Vision Edge, models can be trained for image classification and then be downloaded and run on device, all without leaving your browser. Check out a post from some of my colleagues on building their own model via Cloud ML Vision Edge.

Plus, if you use the new CameraX found in Android Jetpack, there are some nice integrations that allow these models to be easily added to your camera implementation.

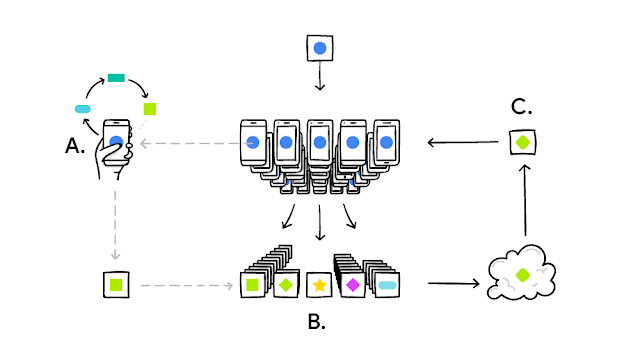

Federated Learning

With the growth of ML comes the growth of data collection. Google had a big focus on privacy this year, with big points being made in nearly every session on ensuring users' privacy is maintained. To achieve this, Google introduced the concept of Federated Learning. Federated Learning essentially allows on-device ML models to be trained locally with the user's data; the resulting model changes can then be pushed to the cloud-based model without the user's data ever being sent off the device. Furthermore, with this technology, models can be trained in a way that helps reduce bias. Google uses this concept today for Gboard, which allows their keyboard application to learn new words without knowing what the user is typing.
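To make the idea concrete, here is a minimal sketch of the aggregation step, assuming a simple sample-weighted average of per-device updates. It is an illustration only and not Google's implementation: the `FederatedAveraging` class, `ClientUpdate` type, and `aggregate` method are hypothetical names, and real systems add steps such as secure aggregation that are omitted here.

```java
import java.util.List;

final class FederatedAveraging {
    private FederatedAveraging() {}

    /** An update computed on one device. Only this leaves the device, never the raw data. */
    static final class ClientUpdate {
        final double[] weightDelta; // change to each model weight after local training
        final int sampleCount;      // number of local examples used for training

        ClientUpdate(double[] weightDelta, int sampleCount) {
            this.weightDelta = weightDelta;
            this.sampleCount = sampleCount;
        }
    }

    /**
     * Returns new global weights: the old weights plus the sample-weighted
     * average of all client deltas.
     * Assumption: every weightDelta has the same length as globalWeights.
     */
    static double[] aggregate(double[] globalWeights, List<ClientUpdate> updates) {
        int totalSamples = 0;
        for (ClientUpdate update : updates) {
            totalSamples += update.sampleCount;
        }
        double[] result = globalWeights.clone();
        if (totalSamples == 0) {
            return result; // no client contributed, so the model is unchanged
        }
        for (ClientUpdate update : updates) {
            double share = (double) update.sampleCount / totalSamples;
            for (int i = 0; i < result.length; i++) {
                result[i] += share * update.weightDelta[i];
            }
        }
        return result;
    }
}
```

The server in this sketch only ever sees weight deltas and sample counts, which is the property the privacy argument above relies on.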

Augmented Reality

Google continues to make advancements to their ARCore Platform for Android. Last year, Google announced Sceneform, which allowed non-Unity developers to have a more approachable experience with AR on Android. This year, Google has added to their toolkit with Augmented Faces & advancements to Augmented Images.

Augmented Faces

This year, Google introduced Augmented Faces as a part of ARCore for Android. With this announcement, developers can more easily add Augmented Reality experiences on top of a user's face via their device's camera. In the image above, we are demoing adding some texture and an image to the user's face. For more information, see a post from myself and a colleague on how to get started with Augmented Faces today with under 300 lines of code.

Augmented Image Advancements

Augmented Images was released last year as a part of Sceneform for ARCore. Augmented Images allows the user to essentially "scan" a 2D image and allows your application to add a 3D augmented reality experience to it. Google has enhanced this capability so that tracked images remain tracked in the "physical" world even when the camera is no longer pointing at the image. This opens many doors by letting the application use a specific image as an anchor and place AR experiences all around you. Furthermore, Google now allows these tracked images to move, so that the AR experience moves with them and does not get lost or have to be rescanned. For more information, see a post from myself and a colleague demonstrating these advancements.

Jetpack

At Google IO 2018, Google introduced Android Jetpack - an opinionated suite of libraries and tools built to help developers deliver high-quality apps more easily and with less boilerplate. The Android Community promptly adopted Jetpack into their applications with a high level of enthusiasm. This year, Google has added more support for common pain points in Android Development. These advancements will not only make developers happier, but will also make more complex features easier to build.

CameraX

Camera development of any kind within Android has been a common pain point for many years now. With the Camera and Camera2 (for Lollipop and newer devices) APIs, developers often had to hack together device- and manufacturer-specific fixes to get correct camera functionality on all (or as many as feasible) devices. CameraX addresses these issues by creating a simplified API for handling common camera functionality. Furthermore, CameraX also includes support for running image analysis with Machine Learning to perform tasks such as Text Recognition. For more information, check out a post from some of my colleagues on the topic.

Security

If you watch the news, then you know that the security of your users' data is of the utmost importance. However, the APIs for encrypting data are not only difficult, but also time-consuming to write and understand. Furthermore, unless you happen to be a security expert, it's hard to know if the security you do have is sufficient. To address this issue, Google released Jetpack Security, which provides an implementation of the security best practices related to reading and writing data, as well as key creation and verification. Adopting such a framework helps ensure not only that your data is securely encrypted, but also that it is done efficiently and without the need for a lot of boilerplate code.

Coroutines

Kotlin Coroutines have been quickly gaining steam within the Android Community. Coroutines are a Kotlin feature for managing background work: they convert async callbacks for long-running tasks, such as database or network access, into sequential code, which simplifies code by reducing the need for callbacks. However, until recently, many of the libraries within Jetpack simply did not work with Coroutines. Google has not only added Coroutine support to Jetpack, but has also officially made Coroutines its recommendation for thread management.

Play Store

The Google Play Store, where Android users go today to download applications, has made some great strides over the past few years to increase security, reduce app size, and increase app developers' understanding of their applications' analytics. This year at Google IO was no different, with great advancements being made in all of these categories and more.

In-App Updates

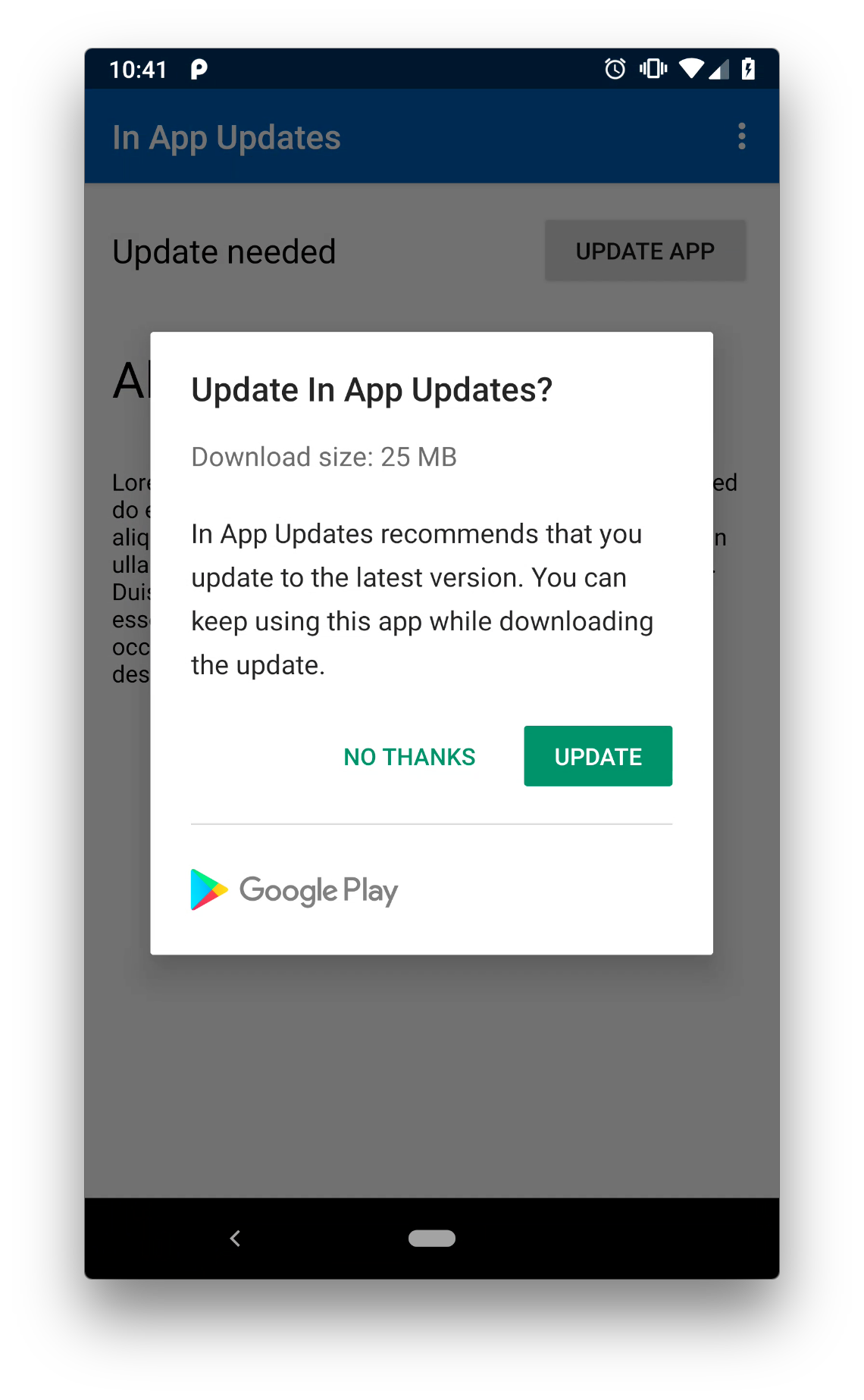

Delivering timely updates to users is key for the success and adoption of new features. However, within mobile that means application developers are dependent upon auto-updates or the user manually checking and updating their applications. As a result, many applications have built features that block app usage in the event that a "force update" is required, or that present users with a prompt stating that an update is available and kindly suggesting that they upgrade. However, both of these scenarios are poor user experiences and interrupt the user's workflow, which could mean the loss of conversions.

As a result, Google has introduced In-App Updates to address these problems. Not only do In-App Updates provide their own "force update" feature, they also create a flexible option that allows the user to download the update and choose when to install it, preventing the disruption of the user's flow. For example, users can see that an update is available and indicate that they want to download it, but wait until their app is closed before the update is installed. This allows the user to complete their flow while letting the update work behind the scenes without user disruption.
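One way an app might decide between the two flows is sketched below. This is plain Java and does not call the Play Core library: the `UpdatePolicy` class, its `Mode` enum, and the idea of a `minSupportedVersion` threshold are assumptions made for illustration, not part of Google's API.

```java
/** Hypothetical policy for picking an in-app update flow from version codes. */
final class UpdatePolicy {
    private UpdatePolicy() {}

    enum Mode {
        NONE,      // nothing newer is available
        FLEXIBLE,  // download in the background, install when the user chooses
        IMMEDIATE  // block the app until the update is installed
    }

    /**
     * Assumption: the backend tells the app the oldest version it still
     * supports. Anything older must update immediately; anything else that
     * is merely out of date gets the flexible flow.
     */
    static Mode chooseMode(int installedVersion, int availableVersion, int minSupportedVersion) {
        if (availableVersion <= installedVersion) {
            return Mode.NONE;
        }
        if (installedVersion < minSupportedVersion) {
            return Mode.IMMEDIATE;
        }
        return Mode.FLEXIBLE;
    }
}
```

Reserving the immediate flow for versions that can no longer work keeps the interruption the section above warns about to a minimum.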

Play Store Ratings

App ratings have historically been calculated differently between Android and iOS. iOS App Store ratings are calculated off of your latest app version, while Android provides the overall lifetime average rating. Each system has its own pros and cons. Within Android, however, this means that if your app had a bumpy start, it's really hard to recover even after you fix the source of the complaints. To help address this, Google is adjusting how the Play Store ratings are calculated by giving a weighted priority to newer release versions. This can work in your favor if you are improving, but can cause your rating to decline a bit faster if your reviews begin to regress.
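The difference between the two approaches can be illustrated with a small sketch. Google has not published the exact formula here, so the exponential decay below is purely an assumption chosen for illustration, and `RatingMath`, `lifetimeAverage`, and `recencyWeighted` are hypothetical names.

```java
/** Illustrative comparison of a lifetime average and a recency-weighted average. */
final class RatingMath {
    private RatingMath() {}

    /** Plain average over every rating ever received. */
    static double lifetimeAverage(int[] stars) {
        if (stars.length == 0) {
            return 0.0;
        }
        double sum = 0.0;
        for (int s : stars) {
            sum += s;
        }
        return sum / stars.length;
    }

    /**
     * Weighted average in which a rating left N releases ago counts decay^N
     * as much as a rating on the current release (0 < decay <= 1).
     * Assumption: stars[i] and releasesAgo[i] describe the same rating.
     */
    static double recencyWeighted(int[] stars, int[] releasesAgo, double decay) {
        double weightedSum = 0.0;
        double totalWeight = 0.0;
        for (int i = 0; i < stars.length; i++) {
            double weight = Math.pow(decay, releasesAgo[i]);
            weightedSum += weight * stars[i];
            totalWeight += weight;
        }
        return totalWeight == 0.0 ? 0.0 : weightedSum / totalWeight;
    }
}
```

For two 1-star ratings left two releases ago and two 5-star ratings on the current release, the lifetime average is 3.0, while a decay of 0.5 gives roughly 4.2. The same weighting pulls the score down just as quickly when recent ratings are the bad ones.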

Dynamic Modules Exit Beta

Dynamic Feature Modules were released in 2018, but only to a small subset of beta developers. This year, Google has opened up Dynamic Feature Modules to all applications. This means that you can deliver entire feature subsets to users on-demand, conditionally, or a combination of both. The real perk of this is that it can significantly reduce the download size of your application and allow you to ship features only to a subset of people, or to users based on their app usage, locale, device type, and more.

Closing

The aforementioned highlights were just a few of the many announcements Google made at IO. With Android Q releasing later this year, it is highly recommended that you take some time to ensure compatibility with the latest OS changes. As you adopt these new changes, it becomes increasingly important to lean on Google's recommendations and best practices found in Jetpack to ensure long-lasting compatibility. Lastly, introducing ML or AR to your application has never been more within reach. I hope you take some time to find how AR/ML may solve some of your business needs.

Portions of this page are reproduced from work created and shared by the Android Open Source Project and used according to terms described in the Creative Commons 2.5 Attribution License.