Blog May 20, 2019

Google Wants to Democratize Machine Learning

At I/O this year, Sundar Pichai spoke about the common thread connecting Google's latest machine learning initiatives: democratization. Google envisions its ML suite becoming the premier platform for developers to solve technical challenges, all without needing a data science background.

Google's beta release of Cloud AutoML aims to let users "train high-quality custom machine learning models with minimal effort and machine learning expertise." The toolkit handles images, video, structured data, natural language, and translation, and it even assists with training, testing, and deploying your models. Developers no longer need to download unfamiliar command-line tools, lumber through model configuration, and then watch their desktops glow orange from hours of nonstop training.

Google claims that minimal coding is required to use its AutoML tools, and it even provides fresh UIs for interacting with them. Many enterprises are eager to explore machine learning, and Google's recent strides toward simplifying it make adoption easier than ever.

To test out the AutoML Vision tools, we grabbed some contraband lying around the office and set out to build a simple, vision-based candy detector.


Project Setup

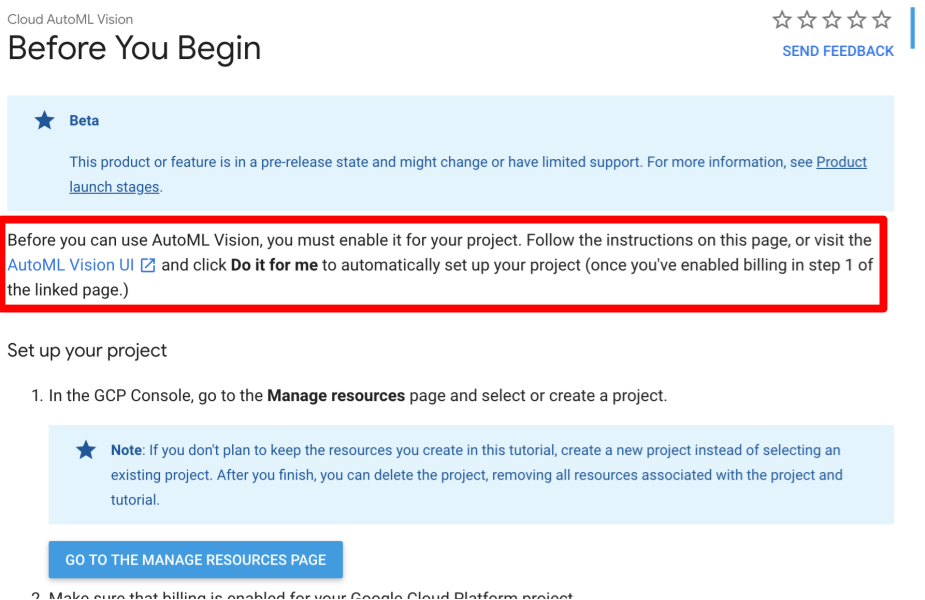

While AutoML's UI works well, reaching it requires some project configuration. Before it will even open, you'll need to set up a billing-enabled cloud project, authorize a few permissions, provision a service account, grant your accounts the necessary authorization, and create a Google Cloud Storage bucket. Over the years, Google's project console has become notoriously hard to navigate, so managing all of this is a chore.

Luckily, Google provides a "do it for me" option in the AutoML UI that handles much of this setup on your behalf. It's a handy feature that we overlooked at first, because it only becomes accessible after you've created a billing-enabled project. (Annotated screenshot below.)

Training

Gathering Training Data

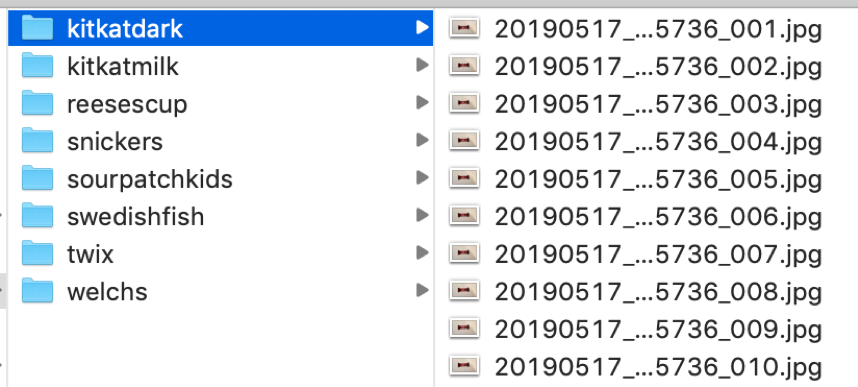

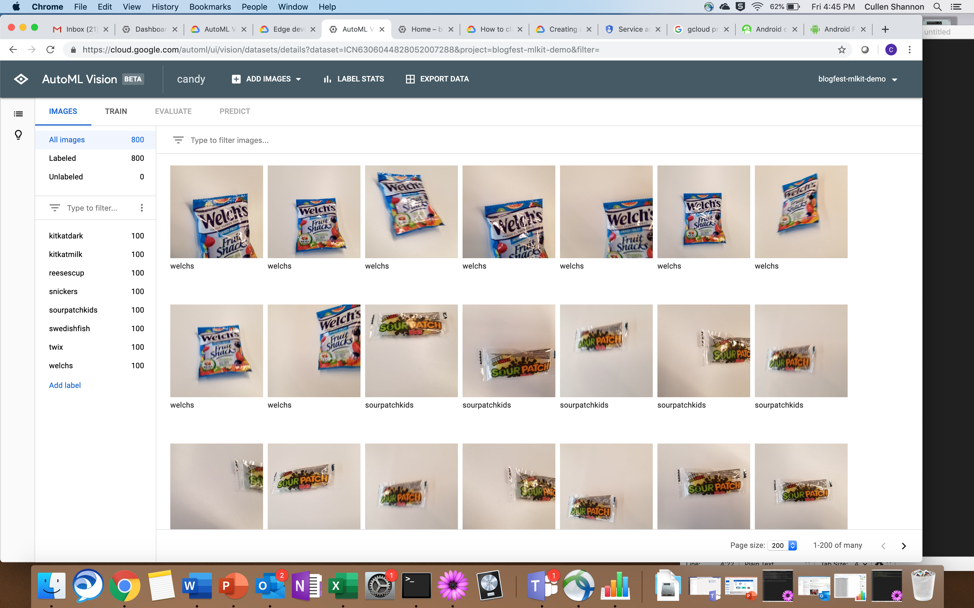

AutoML requires at least 100 images of each classification you intend to train. We trained on eight different candies, using 800 images total. That sounds like a lot, but the camera's burst mode is perfect for this — we captured all our training data in about a minute.
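Before zipping everything up, it's worth confirming that every label actually clears the 100-image minimum. Here is a minimal Python sketch, assuming a hypothetical layout with one folder per candy (for example `candy/skittles/IMG_0001.jpg`) and assuming the photos are JPEG or PNG files. `count_by_label` and `labels_below_minimum` are our own illustrative helpers, not part of any Google tool.

```python
from collections import Counter
from pathlib import PurePath

# Assumptions: one folder per label, and these file types; adjust to your camera's output.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}
MIN_PER_LABEL = 100  # the per-label minimum we worked to in this post


def count_by_label(paths):
    """Count image files per label, using each file's parent folder name as the label."""
    counts = Counter()
    for path in map(PurePath, paths):
        if path.suffix.lower() in IMAGE_SUFFIXES:
            counts[path.parent.name] += 1
    return dict(counts)


def labels_below_minimum(counts, minimum=MIN_PER_LABEL):
    """Return, alphabetically, the labels that still need more images."""
    return sorted(label for label, n in counts.items() if n < minimum)
```

To run it against real photos, feed it paths from disk, e.g. `count_by_label(p for p in Path("candy").rglob("*") if p.is_file())` after importing `Path` from `pathlib`. Working on plain paths keeps the counting logic separate from the filesystem, which makes it easy to check.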

I call this look "Blue Steele"

Loading the Images

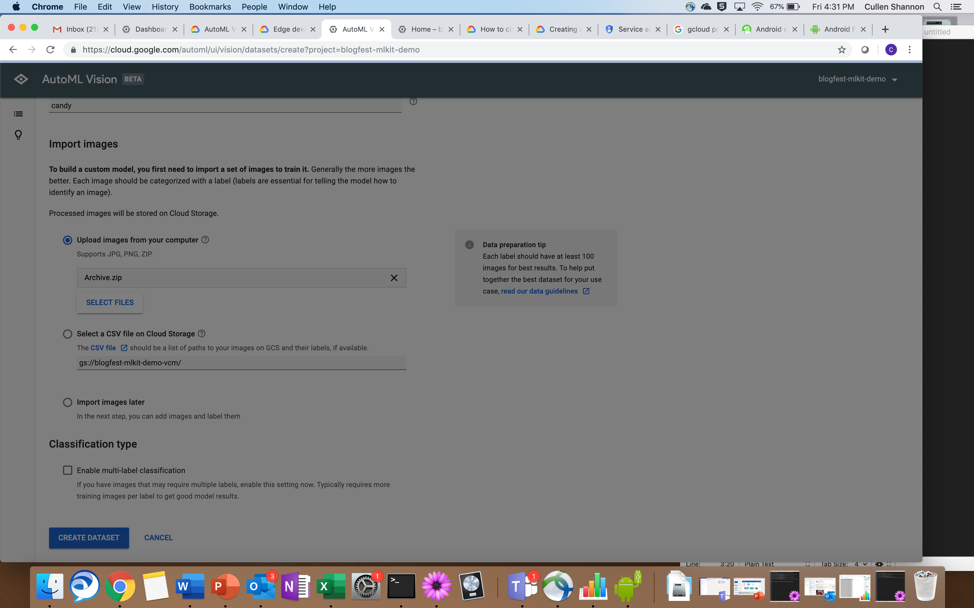

AutoML accepts training data as individual images or as zip files. With a zip file, you can place your images in subdirectories so they get labeled properly when AutoML imports them. This spares you from having to label each image individually.
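The folder-per-label archive can be built from a script instead of by hand. A minimal Python sketch, assuming the same hypothetical `candy/<label>/<image>` layout and assuming AutoML takes each image's label from its folder in the archive, as it did for us. `build_training_zip` and `labels_in_zip` are illustrative names of our own.

```python
import zipfile
from pathlib import Path


def build_training_zip(root, out):
    """Zip the images under `root` so each archive entry is '<label>/<image>'.

    Only files inside immediate subdirectories of `root` are included; the
    subdirectory name is what we expect to become the label. `out` may be a
    filename or a writable binary file object.
    """
    root = Path(root)
    # JPEG/PNG data is already compressed, so ZIP_STORED skips pointless recompression.
    with zipfile.ZipFile(out, "w", compression=zipfile.ZIP_STORED) as zf:
        for label_dir in sorted(p for p in root.iterdir() if p.is_dir()):
            for image in sorted(p for p in label_dir.iterdir() if p.is_file()):
                zf.write(image, arcname=f"{label_dir.name}/{image.name}")


def labels_in_zip(path_or_file):
    """List the top-level label folders found in a training zip."""
    with zipfile.ZipFile(path_or_file) as zf:
        return sorted({name.split("/", 1)[0] for name in zf.namelist() if "/" in name})
```

Calling `labels_in_zip` on the finished archive is a cheap sanity check that it contains exactly the folders you expect before you spend minutes uploading it.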

Creating a Dataset

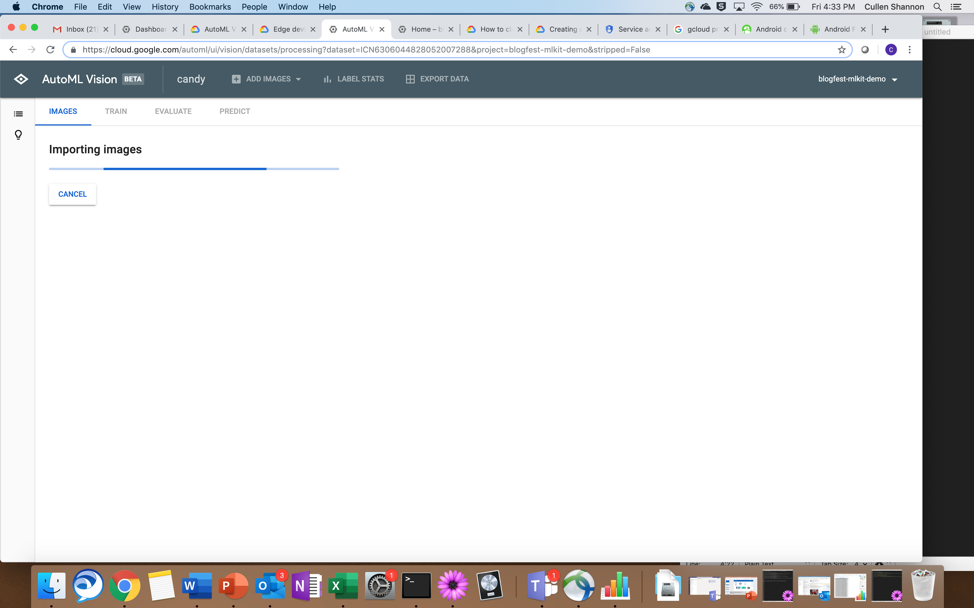

AutoML walks you through the fields required to create a dataset: a name, a data source (we used a zip archive), and a classification type. During upload, our screen hung for over two minutes while it sent our 800 MB payload. (A progress indicator would've been nice.)

Eventually, a new screen appeared, and we could view our training data in the console.

Training the Model

Since we uploaded a zip file with a proper hierarchy, our labels were preloaded for us. We tested changing a few labels around and found them easy to modify. Now with all our training data loaded and labeled, the model was ready for training.

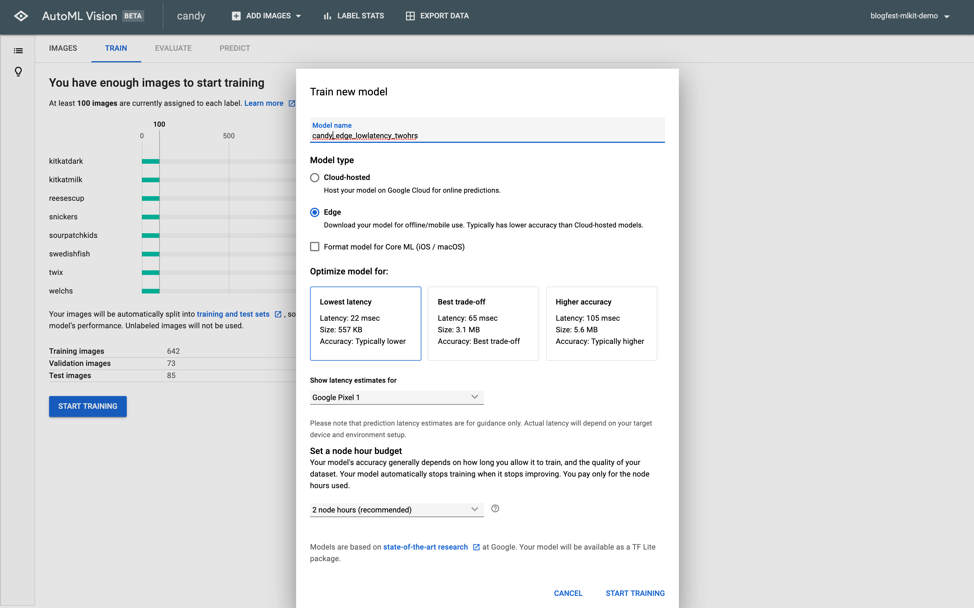

Google provides a helpful dialog to assist with model configuration. These selections deserve close attention, since the resulting model can take hours to build. Here, you choose whether the model is Cloud-hosted or Edge; we selected Edge to target an offline-capable Android device.

AutoML offered three Edge options, each balancing model size, accuracy, and latency differently. We chose the lowest-latency option.

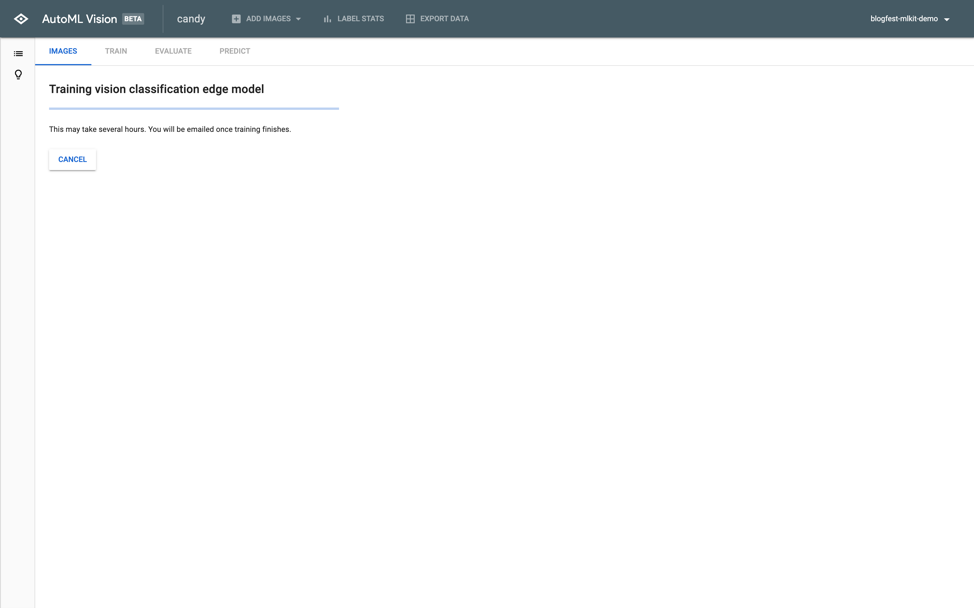

Once we proceeded, Google notified us (both in the window and via email) that training had begun. It said training might take "several hours" but gave no more specific estimate.

Some ping pong as we waited...

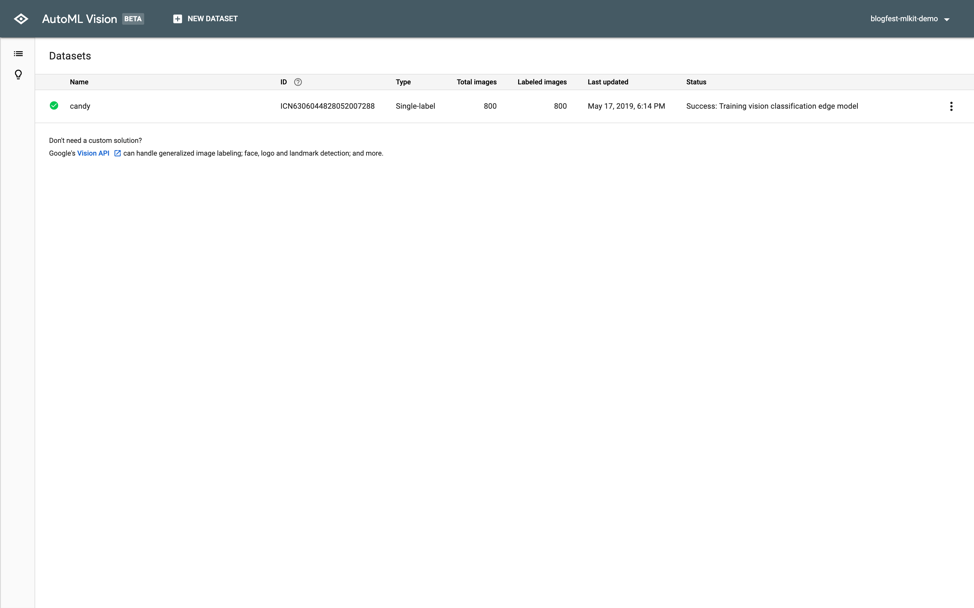

We returned to a finished model. With 800 images, eight labels, and 800 MB of training data, training completed in under two hours. Not bad!

Testing the Model

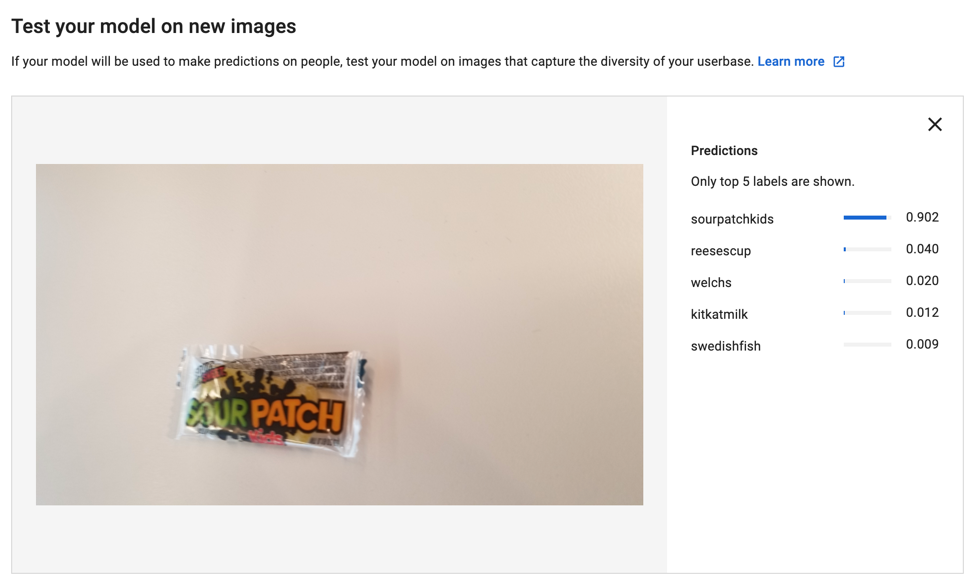

AutoML provides a nice feature that lets you test new images directly in the UI. We tested a few images of different candies, and the new model worked as expected.

Success! And we didn't have to export any models to try it out! In less than four hours, we went from 800 MB of candy photos to a working machine learning model that produced meaningful results!

Tossing it into Android

We configured our model for Android, but it could just as easily have targeted iOS or the web. AutoML lets you export the resulting model files (a TensorFlow Lite .tflite file in our case) to suit your desired implementation.

We started from TensorFlow's example app on GitHub and tweaked it to use our model, labels, and branding. A few minutes later, we had a functional demo!
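Most of the tweaking comes down to pairing the model's raw outputs with your own labels. The sketch below shows that post-processing step, written in Python for brevity rather than in the example app's own code. It assumes the exported label file lists one label per line in the same order as the model's outputs, and that the model is uint8-quantized so each score falls between 0 and 255; check both against your own export. `load_labels` and `top_k` are hypothetical helper names.

```python
def load_labels(text):
    """Parse a label file that lists one label per line, in model-output order."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def top_k(labels, raw_scores, k=3, quantized=True):
    """Return the k highest-scoring (label, score) pairs, best first.

    Assumes raw_scores is index-aligned with labels. When quantized is True,
    raw_scores are treated as uint8 values (0-255) and rescaled to 0.0-1.0.
    """
    if len(labels) != len(raw_scores):
        raise ValueError(f"{len(labels)} labels but {len(raw_scores)} scores")
    scores = [s / 255.0 for s in raw_scores] if quantized else [float(s) for s in raw_scores]
    ranked = sorted(zip(labels, scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]
```

The length check matters more than it looks: if the label file and the model disagree on the number of classes, every prediction would be silently attributed to the wrong candy.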


Conclusion

Though not fully polished, AutoML Vision delivers on Google's ambition to simplify machine learning and artificial intelligence. It's simple, powerful, and requires little data science knowledge to use. Now, developers can transform vague ML concepts into rapid prototypes, build and train custom models, and help guide enterprises towards ML-driven enhancements.

Authors

Cullen Shannon is a Mobility Manager for CapTech, based out of the Reston, VA office. With more than 15 years of Fortune 500 experience, Cullen is passionate about using modern technologies to help big enterprises stay nimble and competitive.

Parthiv is a Consultant based out of Columbus with enterprise experience across Android and other platforms. His personal interests include cybersecurity and virtual reality development.